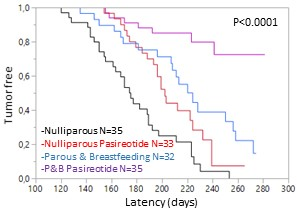

New use of pasireotide-LAR in breast cancer chemoprevention

Patents for licensing CSIC - Consejo Superior de Investigaciones Científicas

Displacement monitoring in civil and industrial work structures

Patents for licensing UNIVERSIDAD DE BURGOS

New material based on manganese oxide with gas generation and high-temperature regeneration capacity

Patents for licensing Consejo Superior de Investigaciones Científicas

TWISCO – Digital TWIn for Sustainable Supply Chain in Construction

Innovative Products and Technologies Luxembourg Institute of Science and Technology (LIST)

Industrial production of humic and fulvic acid from biomethane digestate

Innovative Products and Technologies Luxembourg Institute of Science and Technology (LIST)

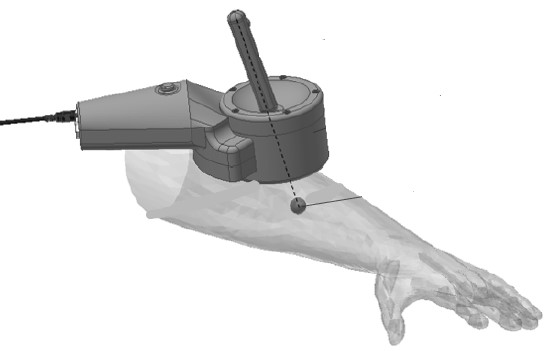

Application of ultrasound in clinical physiotherapy

Patents for licensing Consejo Superior de Investigaciones Científicas

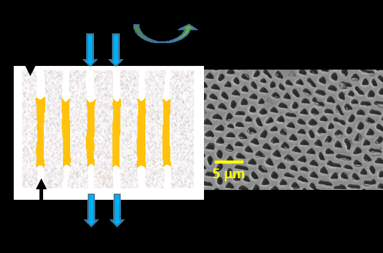

Ceramic membrane with highly aligned pores for CO2 separation

Patents for licensing CSIC - Consejo Superior de Investigaciones Científicas

Casting Magnesium Alloy with Improved Thermal Conductivity

Innovative Products and Technologies Brunel University London

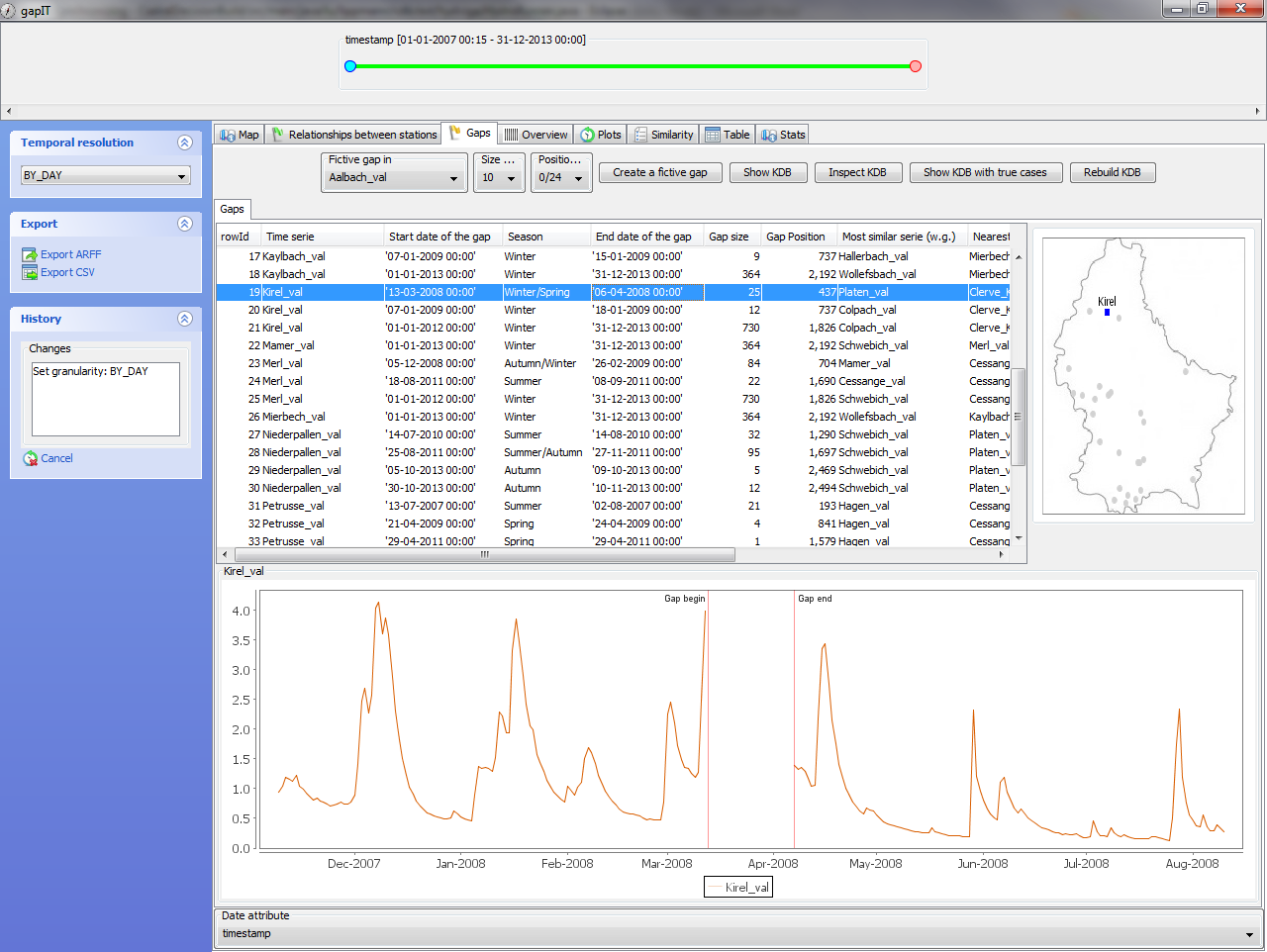

An interactive software tool to deal with missing values (gap filling) in hydrological time series for water management

Innovative Products and Technologies Luxembourg Institute of Science and Technology (LIST)

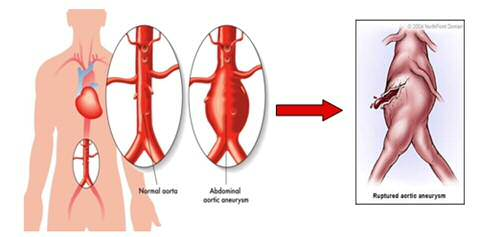

Peptide for the prevention and treatment of aneurysm

Patents for licensing Consejo Superior de Investigaciones Científicas

Hospital emissions: N2O abatement

Innovative Products and Technologies Centre Technology Transfer CITTRU

Screening device with graphene quantum dots and smartphone readout

Patents for licensing Institut Català de Nanociència i Nanotecnologia

Preparation able to produce a biopesticide and/or repellent for controlling plant pathogens

Innovative Products and Technologies Luxembourg Institute of Science and Technology (LIST)

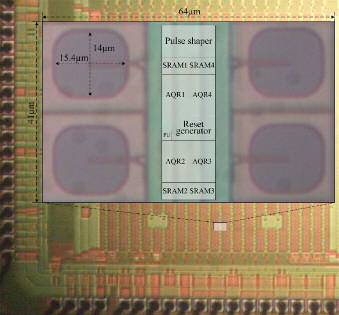

Digital OR Pulse Combining Photomultiplier

Patents for licensing Consejo Superior de Investigaciones Científicas