INFINITE FOUNDRY - Production Efficiency Digital-Twin Solutions for Industry

Innovative Products and Technologies EIT Digital

Control method for a neuroprosthetic device for the reduction of pathological

Patents for licensing CSIC - Consejo Superior de Investigaciones Científicas

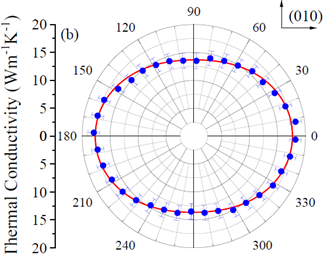

New contactless method for the measurement of thermal conductivity in materials

Patents for licensing ICMAB-CSIC

Weight & gas pressure IoT sensor telemetry solution with machine learning predictive inventory

Innovative Products and Technologies Pulsa

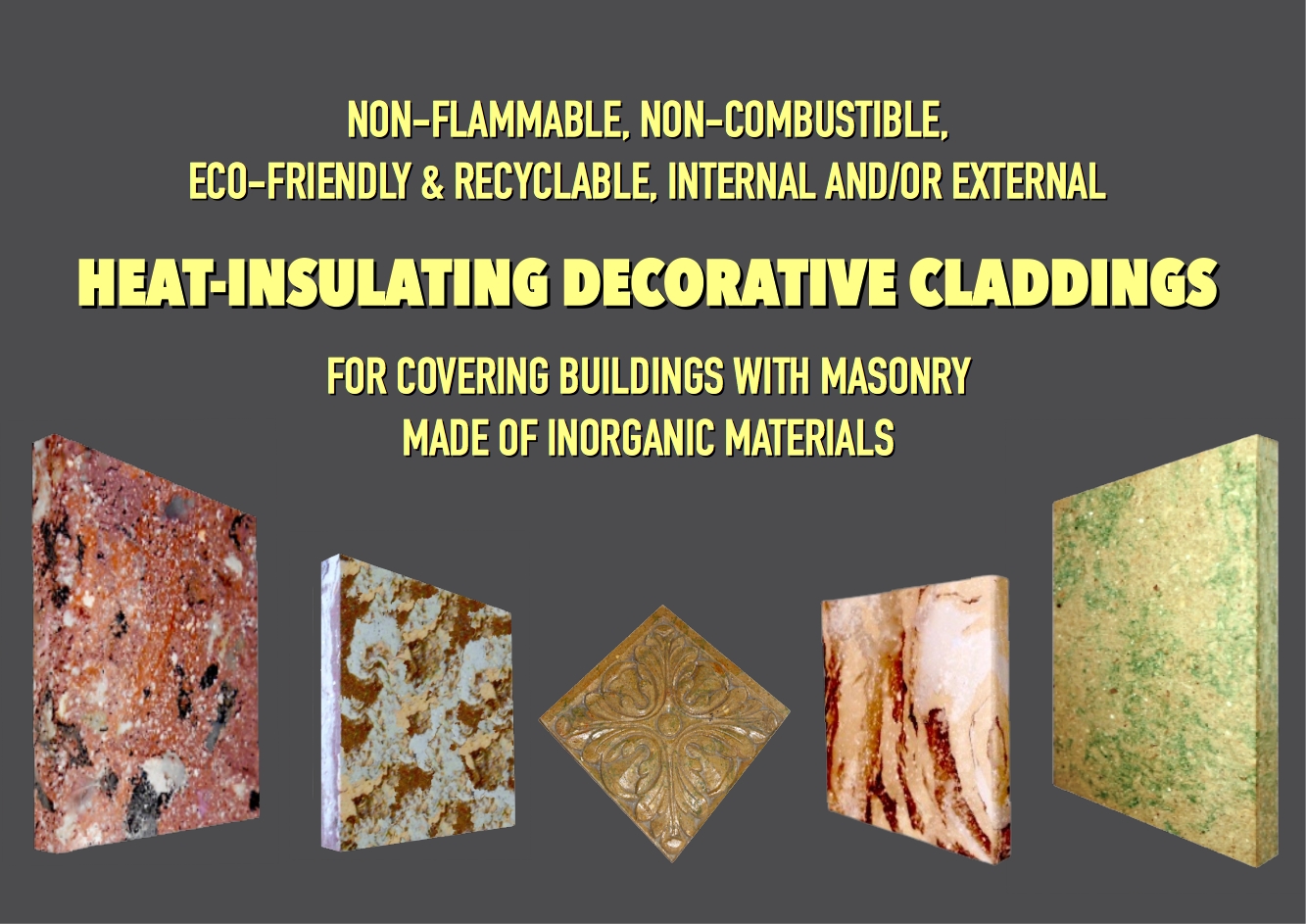

"TRIPLE-R SYNTHETIC WOOD" COMPOSITE MATERIAL

Innovative Products and Technologies ADVANCED PASSIVE TECHNOLOGY

Healthier cigarettes for Heated Tobacco Products (HTPs)

Knowhow and Research output Universidad de Alicante

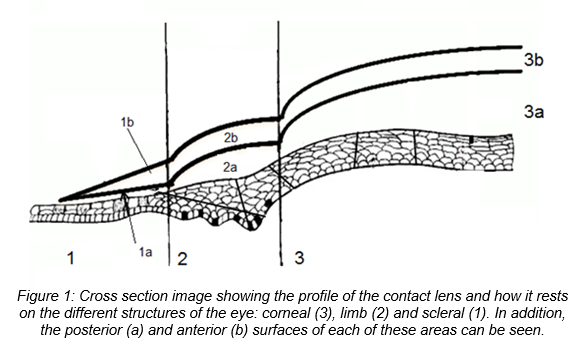

“PresbyCustom”: New customizable contact lenses to correct presbyopia

Patents for licensing UNIVERSIDAD DE ALICANTE

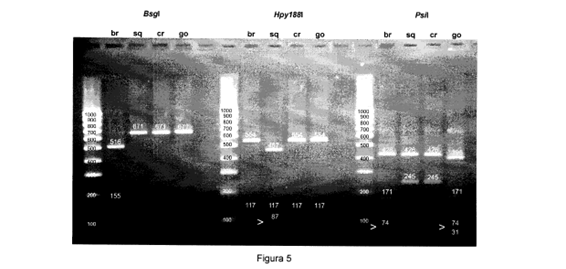

Procedure for the genetic identification of the European species of the genus Maja

Patents for licensing CINBIO

Improvement of power quality in electrical smart grids

Innovative Products and Technologies University of Luxembourg

New environmentally friendly methodology for the catalytic reduction of nitroaromatic compounds

Patents for licensing UNIVERSIDAD DE BURGOS

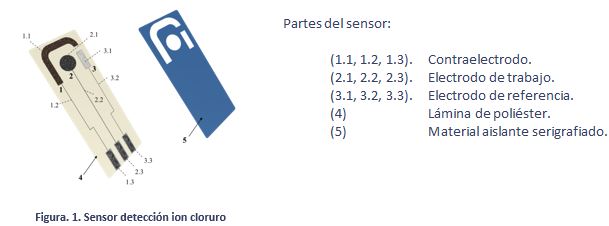

Electrochemical sensor for the “in situ” detection and measurement of chloride ion in fluid samples

Patents for licensing UNIVERSIDAD DE BURGOS

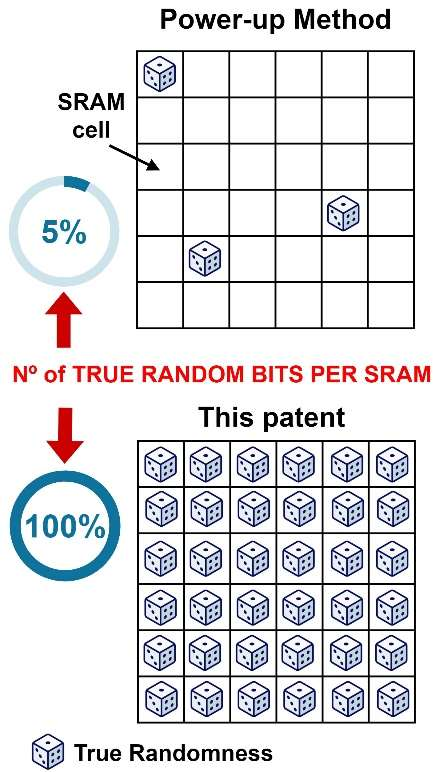

Method and device for generating true random numbers

Patents for licensing CSIC - Consejo Superior de Investigaciones Científicas

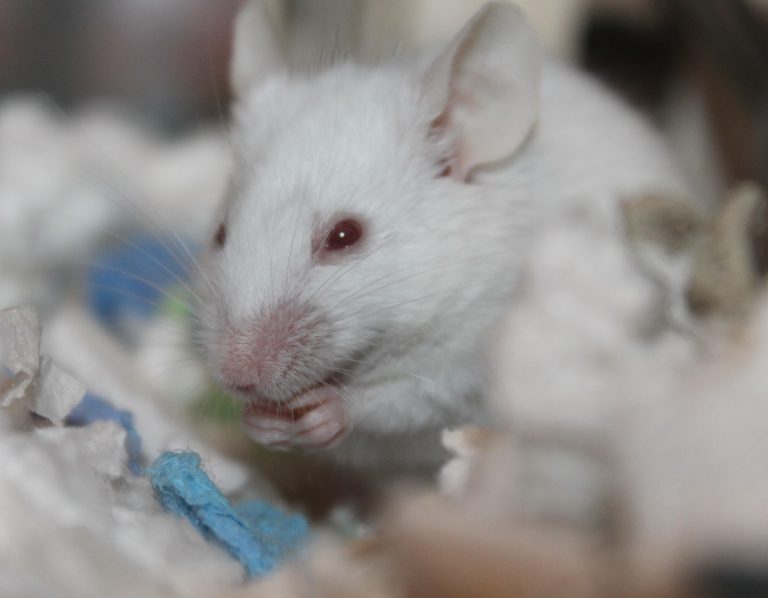

RODENT PLATFORM - Luxembourg Centre for Systems Biomedicine

Research Services and Capabilities University of Luxembourg

Monitoring of Esca viticulture disease symptoms

Innovative Products and Technologies Luxembourg Institute of Science and Technology (LIST)